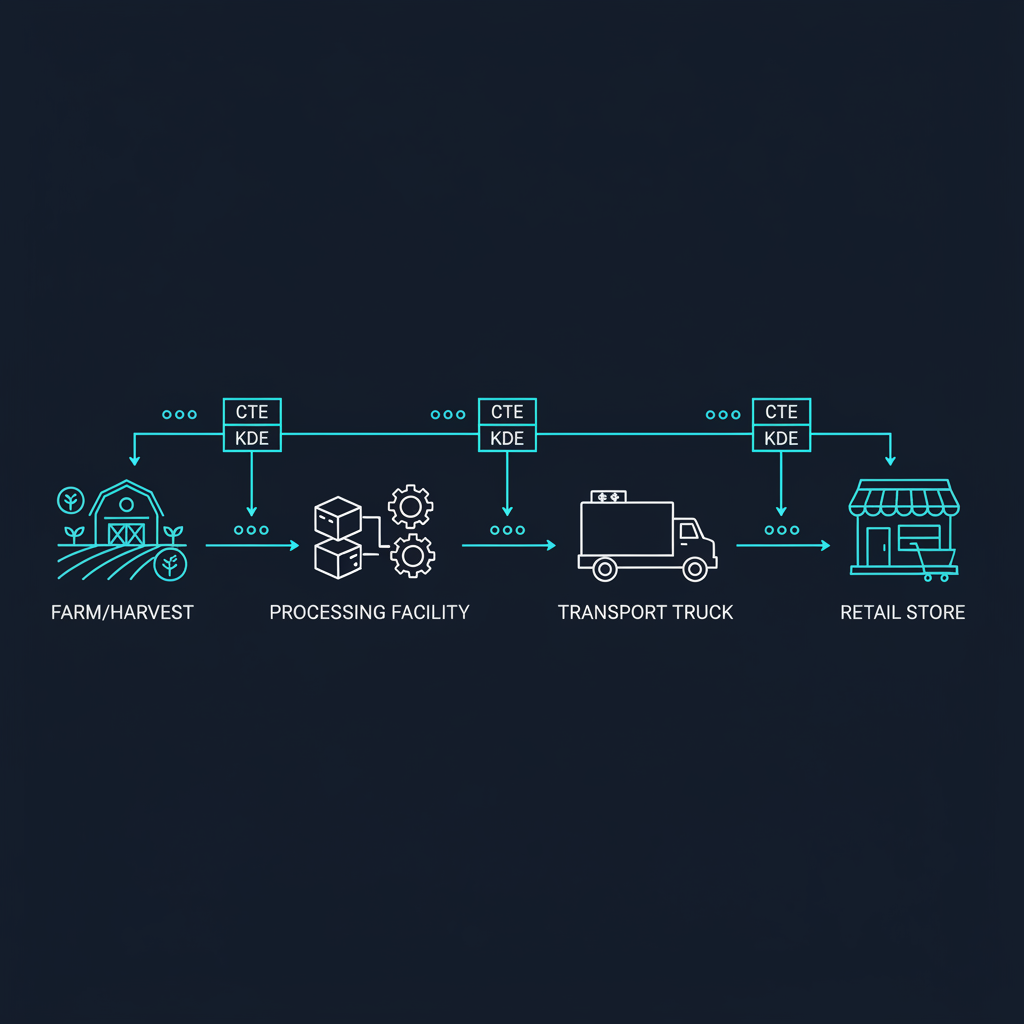

Cold chain traceability data architecture is the structured system of data models, sensor pipelines, and event schemas that captures every Critical Tracking Event (CTE) and its associated Key Data Elements (KDEs) across the temperature-controlled supply chain—from harvest or manufacturing through transport to final delivery. Unlike a compliance checklist, a data architecture defines how traceability information flows, where it lives, and how fast it can be retrieved when an auditor or the FDA asks for it.

I've spent over 20 years in IoT hardware and supply chain visibility, deploying sensor networks across 100+ countries. The most common failure I see isn't a missing thermometer—it's a missing data model. Companies invest heavily in sensors and dashboards but rarely design the data layer that connects device telemetry to regulatory evidence. This article is not a legal overview of FSMA 204. It's a systems architecture article written for the engineers and operations leaders who will actually build the traceability infrastructure.

What the FSMA 204 Delay Actually Means

In March 2025, the FDA extended the FSMA 204 compliance deadline by 30 months—from January 20, 2026 to July 20, 2028. Congress subsequently directed the FDA not to enforce the Food Traceability Rule before that date. The core requirements remain entirely unchanged.

The extension happened because the FDA recognized that even well-prepared companies couldn't comply in isolation. FSMA 204 requires coordinated data sharing across entire supply chains. When your compliance depends on receiving accurate KDEs from upstream partners who aren't ready, the system breaks down regardless of your own readiness.

Key Takeaway: The delay is not a pause signal. It's a 30-month engineering window. Companies that use this time to build proper data architecture will become preferred suppliers. Those that wait will face compressed timelines, higher costs, and reduced market access.

Some retailers are already requiring traceability data within two hours—not the FDA's 24-hour window. Procurement teams are adding "FSMA 204 ready" as a qualification criterion in RFPs. The regulatory deadline may be 2028, but the market deadline is moving faster.

Where the Data Breaks: Identifying Your Traceability Gaps

Most cold chain operations have data. The problem is that it's the wrong kind of data, stored in the wrong format, and disconnected from the systems that need it. Here are the five most common data breakpoints I encounter:

Handoff gaps. Every time a product changes custody—from grower to processor, processor to distributor, distributor to retailer—there's a data boundary. Most companies track what they send but not what the receiver confirms. FSMA 204's CTE model requires both sides of every handoff to be documented with matching lot codes, timestamps, and locations.

Format fragmentation. One supplier sends lot codes in a CSV attached to an email. Another embeds them in EDI 856 ASN messages. A third prints them on packing slips that someone manually keys into an ERP. When the FDA requests an electronic sortable spreadsheet within 24 hours, these disconnected formats collapse.

Sensor-to-record disconnect. IoT temperature sensors generate continuous time-series data—readings every 30 seconds, every minute, every 5 minutes. But FSMA 204 doesn't require continuous telemetry. It requires specific KDEs at specific CTEs. The gap is the mapping layer: which sensor readings correspond to which tracking events, and how do you join them? Understanding how IoT devices are designed to produce structured, event-driven data rather than raw streams is critical to bridging this gap.

Lot code inconsistency. The traceability lot code (TLC) is the spine of FSMA 204. But lot coding practices vary wildly across the industry. Some companies assign lot codes at the pallet level. Others assign them at the case level. Some reuse lot codes across production runs. Without a consistent, unique, and traceable lot code standard, the entire data architecture fails.

Time zone and timestamp drift. When sensors, ERP systems, warehouse management systems, and transport management systems each use different time references, correlating events across the supply chain becomes unreliable. A temperature excursion logged at "14:00" means nothing without knowing whether that's UTC, local time, or the sensor's internal clock (which may have drifted).
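As a minimal sketch of the timestamp problem, the snippet below normalizes every timestamp to UTC before it is stored and applies a measured drift correction to sensor clocks. The function names, the fixed UTC offset per source system, and the 42-second drift are assumptions for illustration; a production system would more likely use IANA time zone names so that daylight saving changes are handled.

```python
from datetime import datetime, timedelta, timezone


def to_utc(timestamp_text: str, utc_offset_minutes: int = 0) -> datetime:
    """Parse an ISO-8601 timestamp and return it as an aware UTC datetime.

    If the text carries its own offset, that offset wins. Otherwise the
    source system's declared offset (an assumption of this sketch) is used.
    """
    parsed = datetime.fromisoformat(timestamp_text)
    if parsed.tzinfo is None:
        source_zone = timezone(timedelta(minutes=utc_offset_minutes))
        parsed = parsed.replace(tzinfo=source_zone)
    return parsed.astimezone(timezone.utc)


def correct_drift(reading_time: datetime, drift_seconds: float) -> datetime:
    """Subtract a measured drift (sensor clock minus reference clock)."""
    return reading_time - timedelta(seconds=drift_seconds)


# A WMS that logs naive local time at UTC-5, and a sensor running 42 s fast.
wms_event = to_utc("2025-06-01T14:00:00", utc_offset_minutes=-300)
sensor_event = correct_drift(to_utc("2025-06-01T19:00:42+00:00"), drift_seconds=42)

print(wms_event.isoformat())
print(wms_event == sensor_event)
```

Once both records are expressed in UTC and corrected for drift, the "14:00" from the warehouse system and the sensor reading can be compared directly.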

The Right Data Model for Cold Chain Traceability

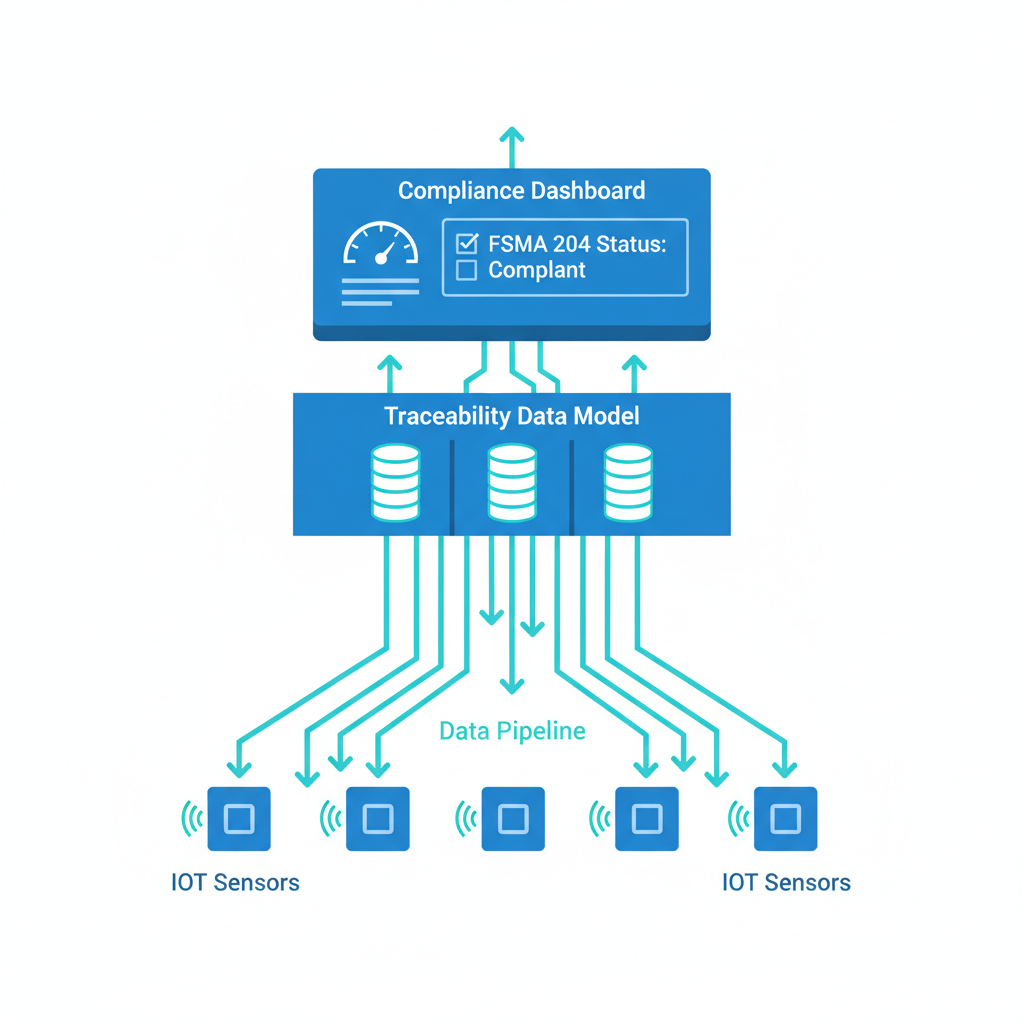

FSMA 204 defines a specific set of CTEs and KDEs. Your data model should mirror this structure directly rather than trying to retrofit existing ERP schemas. Here's the architecture I recommend:

Entity layer. Define your core entities: Location (with FDA-recognized identifiers like GLN or FFRN), Product (with GTIN or equivalent), Lot (with unique TLC), and Actor (the legal entity performing each action).

Event layer. Each CTE becomes a first-class event object with a standardized schema. The seven CTEs defined by the rule are harvesting, cooling, initial packing, first land-based receiving, shipping, receiving, and transformation; most cold chain operations handle only a subset. Each event captures who performed it, what product and lot were involved, where it happened, when it happened, and from/to whom the product moved.

Telemetry layer. This is where IoT sensor data lives—temperature, humidity, shock, light exposure, GPS coordinates. The telemetry layer doesn't replace the event layer. It enriches it. The key design decision is how to link sensor readings to CTE records. I recommend a session-based approach: each shipment or storage period gets a telemetry session ID that links to the corresponding shipping or receiving CTE.

| Layer | Purpose | Key Fields | Update Frequency |
|---|---|---|---|
| Entity | Master data for locations, products, lots | GLN, GTIN, TLC, FFRN | Low (days/weeks) |
| Event (CTE) | Record each custody/transformation event | Event type, timestamp, actor, lot, location | Per transaction |
| Telemetry | Continuous sensor data linked to events | Session ID, sensor ID, value, timestamp (UTC) | Seconds to minutes |
| Evidence | Audit-ready exports and anomaly records | Report ID, query parameters, generation timestamp | On demand |

Evidence layer. This is the layer most companies forget. FSMA 204 requires that you produce an electronic sortable spreadsheet within 24 hours of an FDA request. The evidence layer pre-computes or rapidly generates these exports by joining entity, event, and telemetry data. Design this layer from day one—don't bolt it on later.
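To make the layers concrete, here is a rough sketch of the entity and event objects plus an evidence export that flattens them into sortable CSV. The class names, field names, and column order are my own assumptions, not a schema prescribed by the rule, and the identifiers are placeholders rather than real GLNs or GTINs.

```python
import csv
import io
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Location:
    gln: str   # GS1 Global Location Number (placeholder values below)
    name: str


@dataclass(frozen=True)
class Lot:
    tlc: str   # traceability lot code
    gtin: str  # product identifier


@dataclass(frozen=True)
class CteEvent:
    event_type: str            # e.g. "shipping" or "receiving"
    occurred_at: datetime      # stored in UTC
    actor: str                 # legal entity performing the event
    lot: Lot
    location: Location
    telemetry_session_id: Optional[str] = None  # link into the telemetry layer


def export_evidence(events: list) -> str:
    """Flatten CTE events into CSV text, sorted by lot code and then time."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["tlc", "gtin", "event_type", "occurred_at_utc",
                     "actor", "gln", "telemetry_session_id"])
    for e in sorted(events, key=lambda e: (e.lot.tlc, e.occurred_at)):
        writer.writerow([e.lot.tlc, e.lot.gtin, e.event_type,
                         e.occurred_at.isoformat(), e.actor,
                         e.location.gln, e.telemetry_session_id or ""])
    return buffer.getvalue()


# Placeholder identifiers, not real GLNs or GTINs.
dock = Location(gln="0000000000001", name="Distribution center dock 4")
lot = Lot(tlc="TLC-0001", gtin="00000000000017")
events = [
    CteEvent("receiving", datetime(2025, 6, 2, 6, 0, tzinfo=timezone.utc),
             "Example Distributor", lot, dock),
    CteEvent("shipping", datetime(2025, 6, 1, 18, 0, tzinfo=timezone.utc),
             "Example Processor", lot, dock, telemetry_session_id="sess-42"),
]
print(export_evidence(events))
```

Keeping the export as a pure function over event records means the same code can serve a scheduled pre-computation job or an on-demand request.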

Real-Time Telemetry vs. Reconstructed Records

There's a fundamental architectural choice every cold chain operation must make: do you capture traceability data in real time as events happen, or do you reconstruct records after the fact from existing systems?

Real-time capture means IoT sensors, barcode scanners, and automated systems generate CTE records as products move through the supply chain. A receiving event is created when a pallet is scanned at the dock door. Temperature data streams continuously from in-transit sensors. This approach produces the most accurate and complete data, but it requires significant upfront infrastructure investment.

Reconstructed records means compiling traceability data from purchase orders, invoices, shipping documents, and warehouse logs after the fact. Many companies will start here because they lack real-time infrastructure. The risk is data loss: if a handoff wasn't documented at the time it happened, you can't reconstruct what you didn't capture.

The pragmatic approach for most organizations is a hybrid model. Use real-time capture for high-value, high-risk CTEs—receiving events and shipping events where custody changes—and supplement with batch processing for internal transformation and storage events. Over the 30-month implementation window, progressively shift more CTEs to real-time capture as infrastructure matures.

For the IoT telemetry layer specifically, real-time is non-negotiable. Temperature excursions don't wait for batch processing. A multi-sensor monitoring device that captures temperature, humidity, shock, and light exposure in real time—and transmits data via LTE-M or NB-IoT—provides the continuous evidence chain that auditors look for. The sensor data should stream into a time-series database (InfluxDB, TimescaleDB, or equivalent) with the telemetry session ID linking each reading to its parent CTE.
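The session link can be illustrated with a small sketch. It uses an in-memory SQLite database purely as a stand-in for the relational and time-series stores; the table names, column names, and sample values are assumptions, and a real deployment would run the equivalent query against whichever stores you choose.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE cte_event (
    event_id TEXT PRIMARY KEY,
    event_type TEXT NOT NULL,
    tlc TEXT NOT NULL,
    telemetry_session_id TEXT
);
CREATE TABLE telemetry_reading (
    session_id TEXT NOT NULL,
    sensor_id TEXT NOT NULL,
    recorded_at_utc TEXT NOT NULL,
    temperature_c REAL NOT NULL
);
""")
conn.execute(
    "INSERT INTO cte_event VALUES ('evt-1', 'shipping', 'TLC-0001', 'sess-42')"
)
conn.executemany(
    "INSERT INTO telemetry_reading VALUES (?, ?, ?, ?)",
    [
        ("sess-42", "probe-a", "2025-06-01T19:00:00Z", 3.1),
        ("sess-42", "probe-a", "2025-06-01T19:05:00Z", 3.4),
        ("sess-42", "probe-a", "2025-06-01T19:10:00Z", 4.2),
        ("sess-99", "probe-b", "2025-06-01T19:00:00Z", 9.9),  # another shipment
    ],
)


def session_summary(conn, event_id):
    """Return (tlc, reading_count, min_temp, max_temp) for one CTE."""
    return conn.execute(
        """
        SELECT e.tlc, COUNT(r.temperature_c),
               MIN(r.temperature_c), MAX(r.temperature_c)
        FROM cte_event e
        LEFT JOIN telemetry_reading r
               ON r.session_id = e.telemetry_session_id
        WHERE e.event_id = ?
        GROUP BY e.event_id
        """,
        (event_id,),
    ).fetchone()


print(session_summary(conn, "evt-1"))
```

The LEFT JOIN is deliberate: a CTE with no readings still returns a row with a count of zero, so missing telemetry shows up instead of disappearing from the result.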

Designing for Anomalies, Not Just Normal Operations

The most revealing test of any traceability architecture isn't how it handles normal operations—it's how it handles exceptions. A temperature excursion at 2 AM on a holiday weekend. A shipment that arrives with a mismatched lot code. A sensor that stops reporting mid-transit. These are the scenarios your data model must accommodate.

Excursion records. When a temperature threshold is breached, your system should automatically generate an anomaly record that captures: the sensor reading that triggered the alert, the duration of the excursion, the product and lot affected, the corrective action taken (or not taken), and the disposition decision. This anomaly record links back to the relevant CTE and telemetry session.

Gap detection. If a sensor stops reporting for more than a configurable threshold (say, 15 minutes), the system should flag a data gap. Data gaps are audit red flags. Your architecture should distinguish between "no data" (sensor failure) and "data within range" (normal operation) so that you never present absence of evidence as evidence of compliance.
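A simple way to picture excursion and gap detection is sketched below. The 5.0 °C limit, the 15-minute silence threshold, and all names are illustrative assumptions; readings are assumed to be sorted by time and to come from a single sensor.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Reading:
    at: datetime     # UTC timestamp of the reading
    temp_c: float


def find_excursions(readings, max_temp_c):
    """Return (first_seen, last_seen, peak_temp) per run of over-limit readings."""
    excursions, run = [], []
    for reading in readings:
        if reading.temp_c > max_temp_c:
            run.append(reading)
        elif run:
            excursions.append((run[0].at, run[-1].at, max(r.temp_c for r in run)))
            run = []
    if run:  # excursion still open at the end of the data
        excursions.append((run[0].at, run[-1].at, max(r.temp_c for r in run)))
    return excursions


def find_gaps(readings, max_silence):
    """Return (last_seen, next_seen) pairs where the sensor was silent too long."""
    return [(a.at, b.at) for a, b in zip(readings, readings[1:])
            if b.at - a.at > max_silence]


start = datetime(2025, 6, 1, 2, 0, tzinfo=timezone.utc)
samples = [(0, 3.0), (5, 3.5), (10, 8.2), (15, 8.9), (40, 4.0), (45, 3.8)]
readings = [Reading(start + timedelta(minutes=m), t) for m, t in samples]

excursions = find_excursions(readings, max_temp_c=5.0)
gaps = find_gaps(readings, max_silence=timedelta(minutes=15))
print(excursions)
print(gaps)
```

In this sample the excursion's last over-limit reading is immediately followed by a 25-minute silence, so the true end of the excursion is unknown. Reporting the two results separately keeps that uncertainty visible instead of implying the product was back in range.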

Reconciliation failures. When a receiving CTE's lot code doesn't match the corresponding shipping CTE, the system should generate a reconciliation exception rather than silently accepting the mismatch. These exceptions become your early warning system for supply chain data quality issues.
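Reconciliation can be sketched as a comparison of both sides of each handoff. This example assumes a shared shipment reference as the join key and one lot per shipment, which is a simplification; the field names and issue labels are hypothetical.

```python
def reconcile(shipping_events, receiving_events):
    """Compare both sides of each handoff and list every exception found."""
    shipped = {e["shipment_ref"]: e["tlc"] for e in shipping_events}
    received = {e["shipment_ref"]: e["tlc"] for e in receiving_events}
    exceptions = []
    for ref, tlc in shipped.items():
        if ref not in received:
            exceptions.append({"shipment_ref": ref, "issue": "no_receiving_cte",
                               "shipped_tlc": tlc, "received_tlc": None})
        elif received[ref] != tlc:
            exceptions.append({"shipment_ref": ref, "issue": "lot_mismatch",
                               "shipped_tlc": tlc, "received_tlc": received[ref]})
    for ref, tlc in received.items():
        if ref not in shipped:
            exceptions.append({"shipment_ref": ref, "issue": "no_shipping_cte",
                               "shipped_tlc": None, "received_tlc": tlc})
    return exceptions


shipping = [
    {"shipment_ref": "SHP-1", "tlc": "TLC-0001"},
    {"shipment_ref": "SHP-2", "tlc": "TLC-0002"},
    {"shipment_ref": "SHP-3", "tlc": "TLC-0003"},
]
receiving = [
    {"shipment_ref": "SHP-1", "tlc": "TLC-0001"},   # clean match
    {"shipment_ref": "SHP-2", "tlc": "TLC-0020"},   # keyed in wrong at the dock
    {"shipment_ref": "SHP-9", "tlc": "TLC-0009"},   # receipt with no shipping record
]

for exception in reconcile(shipping, receiving):
    print(exception)
```

Each exception carries both lot codes, so whoever investigates can see what was sent and what was recorded without going back to the source systems.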

The 90-Day Implementation Roadmap

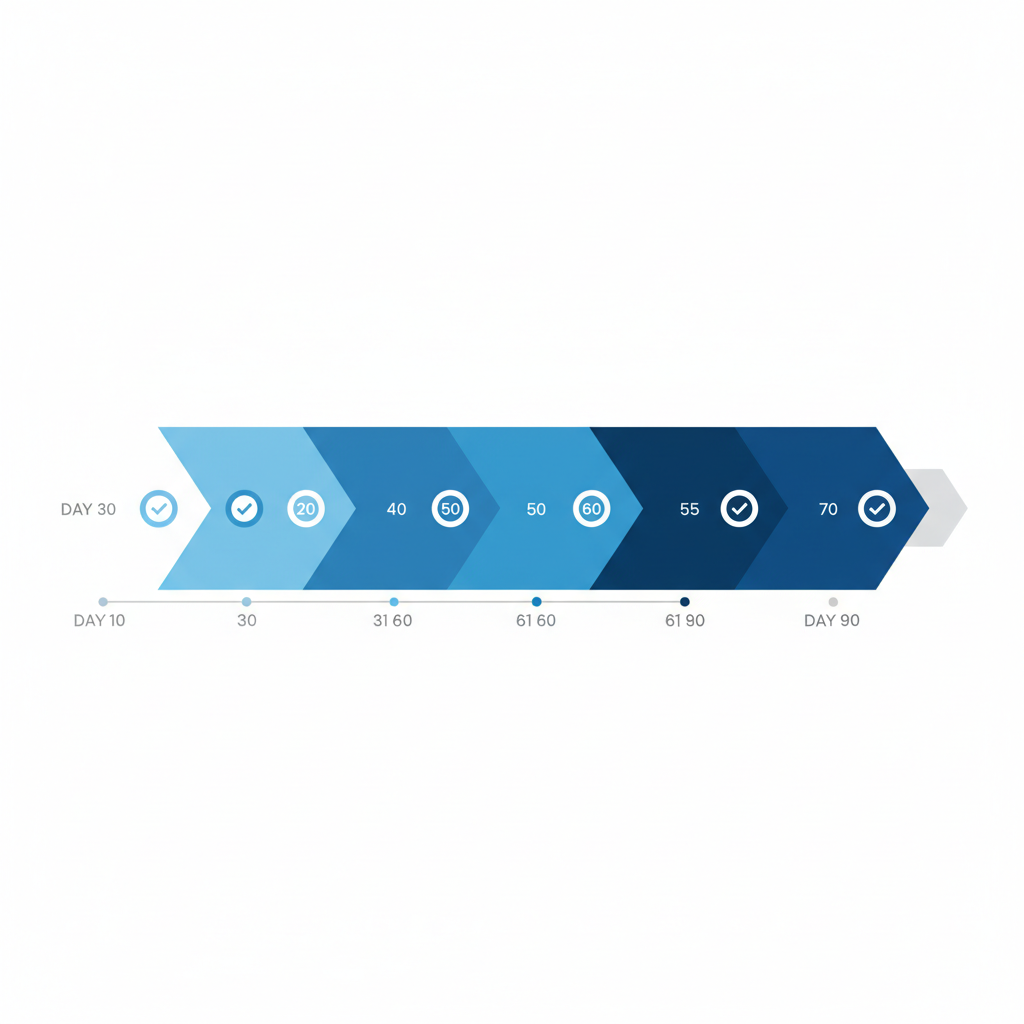

The extension pushes the deadline out to July 2028, but a focused 90-day sprint can establish the foundation now. Here's how I'd structure it:

Days 1–30: Audit and Map. Walk every CTE in your operation. Document where data is generated, what format it's in, and where the gaps are. Identify your highest-risk products on the Food Traceability List—fresh leafy greens, soft cheeses, fresh-cut fruits, shell eggs, certain seafood. Map your supply chain partners and assess their readiness. The deliverable is a data gap analysis showing exactly which KDEs you can produce today and which require new systems.

Days 31–60: Design and Prototype. Build your four-layer data model: entity, event, telemetry, evidence. Choose your technology stack, which doesn't need to be complex: a relational database for entity and event data, a time-series store for telemetry, and a reporting engine for the evidence layer. Deploy IoT sensors on one product line for one route as a proof of concept. Validate that you can generate a sortable spreadsheet from the combined data within the 24-hour requirement.

Days 61–90: Partner Integration and Validation. The hardest part of FSMA 204 isn't internal systems—it's getting accurate KDEs from your supply chain partners. Use this phase to onboard your top 3–5 partners. Define the data exchange format (GS1 EPCIS is the industry standard, but a well-defined CSV template works for smaller partners). Run a mock FDA trace-back exercise: pick a lot code and try to reconstruct its complete journey from source to shelf within 24 hours. Document what worked and what broke.
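For the mock trace-back, a sketch like the following can serve as a starting point. It assumes events are plain records with a lot code, a UTC timestamp string, and, for transformation events, a list of input lot codes; those field names and the sample lots are hypothetical.

```python
def trace_back(events, tlc):
    """Collect every event for a lot plus, via transformations, its input lots."""
    seen, pending, journey = set(), [tlc], []
    while pending:
        lot = pending.pop()
        if lot in seen:
            continue
        seen.add(lot)
        for event in events:
            if event["tlc"] == lot:
                journey.append(event)
                # Transformation events name the lots that went into this one.
                pending.extend(event.get("input_tlcs", []))
    # ISO-8601 UTC strings in one format sort chronologically as plain text.
    return sorted(journey, key=lambda e: e["at"])


events = [
    {"type": "harvesting", "tlc": "TLC-FARM-1", "at": "2025-06-01T10:00:00Z"},
    {"type": "shipping", "tlc": "TLC-FARM-1", "at": "2025-06-01T18:00:00Z"},
    {"type": "receiving", "tlc": "TLC-FARM-1", "at": "2025-06-02T06:00:00Z"},
    {"type": "transformation", "tlc": "TLC-SALAD-9", "at": "2025-06-02T09:00:00Z",
     "input_tlcs": ["TLC-FARM-1"]},
    {"type": "shipping", "tlc": "TLC-SALAD-9", "at": "2025-06-02T15:00:00Z"},
    {"type": "shipping", "tlc": "TLC-OTHER-3", "at": "2025-06-02T16:00:00Z"},
]

for step in trace_back(events, "TLC-SALAD-9"):
    print(step["at"], step["type"], step["tlc"])
```

Starting from the finished lot, the walk follows the transformation back to the farm lot and ignores the unrelated shipment. Running it against real partner data is where the missing links tend to surface.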

After this 90-day sprint, you'll have a working prototype, a clear technology roadmap, and—most importantly—a realistic understanding of how much work remains before July 2028.

Key Takeaways

- The FSMA 204 compliance delay to July 2028 is an engineering window, not permission to pause. Market-driven requirements from major retailers are moving faster than the regulation.

- The most common traceability failures are data architecture problems—handoff gaps, format fragmentation, sensor-to-record disconnects—not missing hardware.

- Build a four-layer data model: entity, event (CTE), telemetry, and evidence. Design the evidence layer from day one.

- Use a hybrid approach: real-time capture for custody-change CTEs and continuous IoT telemetry, batch processing for internal events initially.

- Design for anomalies first. Excursion records, gap detection, and reconciliation failures are what auditors actually scrutinize.

- A focused 90-day sprint—audit, prototype, partner integration—builds the foundation for full compliance well before the deadline.

Frequently Asked Questions

What's the difference between FSMA 204 and previous traceability requirements?

Previous regulations under the Bioterrorism Act required only "one-up, one-back" tracing—knowing your immediate supplier and immediate customer. FSMA 204 requires end-to-end traceability with specific KDEs at each CTE for foods on the Food Traceability List, and the ability to produce electronic sortable records within 24 hours of an FDA request.

Do I need IoT sensors to comply with FSMA 204?

FSMA 204 does not mandate any specific technology—it doesn't require electronic recordkeeping or IoT sensors. However, the practical reality of producing complete, accurate traceability records within 24 hours makes manual systems extremely difficult to scale. IoT sensors with real-time data transmission are the most reliable way to maintain continuous temperature evidence for cold chain operations.

How does the data architecture differ for domestic vs. foreign suppliers?

The requirements apply equally to domestic operations and foreign firms shipping food to the U.S. However, foreign suppliers often face additional challenges: different data standards, time zone complexity, and connectivity limitations during ocean transit. Your data architecture should account for delayed data synchronization from international partners while still meeting the 24-hour response window.

What happens if my supply chain partners aren't ready?

This is precisely why the FDA extended the deadline. Your traceability data is only as complete as your weakest partner's data. Start partner onboarding early, define minimum data exchange requirements, and provide templates or tools to reduce the burden on smaller suppliers. Consider GS1 EPCIS as a common data standard where possible.

Can existing ERP systems handle FSMA 204 compliance?

Most ERP systems can store the required data elements, but they weren't designed for the specific CTE/KDE structure that FSMA 204 requires. The gap is usually in the event model—ERPs track transactions, not traceability events—and in the rapid export capability. A middleware layer that maps ERP transactions to CTEs and enables fast querying is often the most practical approach.
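A middleware mapping of this kind might look roughly like the sketch below. The ERP transaction type names, the field names, and the short list of required KDEs are all assumptions made for illustration; the real mapping depends on your ERP and on the KDEs each CTE requires.

```python
# Hypothetical ERP transaction types mapped to CTE types.
ERP_TO_CTE = {
    "GOODS_ISSUE": "shipping",
    "GOODS_RECEIPT": "receiving",
    "PRODUCTION_CONFIRMATION": "transformation",
}
# A deliberately short, illustrative subset of KDEs.
REQUIRED_KDES = ("tlc", "location_id", "occurred_at_utc")


def map_transaction(txn: dict):
    """Turn one ERP transaction into a CTE record, or None if it is not a CTE."""
    cte_type = ERP_TO_CTE.get(txn.get("txn_type"))
    if cte_type is None:
        return None  # e.g. invoices or price updates carry no traceability event
    event = {"event_type": cte_type, "source_txn_id": txn.get("txn_id")}
    for field in REQUIRED_KDES:
        event[field] = txn.get(field)
    # Surface gaps explicitly instead of storing an incomplete event silently.
    event["missing_kdes"] = [f for f in REQUIRED_KDES if not txn.get(f)]
    return event


transactions = [
    {"txn_id": "T1", "txn_type": "GOODS_RECEIPT", "tlc": "TLC-0001",
     "location_id": "DC-04", "occurred_at_utc": "2025-06-02T06:00:00Z"},
    {"txn_id": "T2", "txn_type": "GOODS_ISSUE", "location_id": "DC-04",
     "occurred_at_utc": "2025-06-02T15:00:00Z"},  # lot code never captured
    {"txn_id": "T3", "txn_type": "INVOICE"},
]

mapped = [map_transaction(t) for t in transactions]
for event in mapped:
    print(event)
```

The `missing_kdes` list turns the ERP gap into something measurable: counting events with missing fields per transaction type shows where capture needs to improve first.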

The cold chain traceability challenge is fundamentally a data architecture problem, not a sensor problem or a compliance checkbox exercise. If you're thinking through your own traceability data model and want to discuss the IoT sensor layer, I'd welcome the conversation.

Further reading: Why GPS-Only Trackers Fail Pharmaceutical Cold Chain Compliance